When you look up the UK university rankings, it’s easy to believe the numbers. A university ranked #3 must be better than one at #12, right? But what if the ranking isn’t about quality at all - but about how the data was twisted to fit a formula? The truth is, UK league tables don’t measure education. They measure how well universities game the system.

What Actually Gets Scored?

The main rankings - from The Guardian, The Times, and the Complete University Guide - all use similar ingredients, but mix them differently. Each one has its own secret sauce. The most common ingredients? Student satisfaction, graduate salaries, research income, teaching hours, and student-to-staff ratios. But here’s the catch: not all of these things matter to you as a student.

Take student satisfaction. It’s often weighted at 20-30% of the total score. That sounds fair - if students are happy, the experience must be good. But the surveys are self-reported, and final-year students often fill them in under deadline and exam pressure, when they are tired or frustrated. A university that lowers its entry requirements to attract more applicants might see satisfaction scores rise simply because students are less stressed. Meanwhile, a top-tier research school with high entry standards might score lower because its students are overworked. The system rewards comfort over rigor.
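To see how much the "secret sauce" matters, here is a minimal sketch of a weighted composite score. Everything in it is an assumption made for illustration: the metric names, the 0-100 scores, the two weighting schemes, and the universities themselves. No real league table publishes exactly this formula.

```python
# Hypothetical metric scores on a 0-100 scale. Not real league table data.
UNIVERSITIES = {
    "Uni A": {"satisfaction": 90, "outcomes": 70, "research": 40, "ratio": 70},
    "Uni B": {"satisfaction": 70, "outcomes": 75, "research": 70, "ratio": 70},
}

# Two hypothetical weighting schemes; each set of weights sums to 1.
SATISFACTION_HEAVY = {"satisfaction": 0.30, "outcomes": 0.30, "research": 0.10, "ratio": 0.30}
RESEARCH_HEAVY = {"satisfaction": 0.20, "outcomes": 0.25, "research": 0.30, "ratio": 0.25}


def composite_score(metrics, weights):
    """Weighted sum of the metric scores."""
    return sum(weights[name] * metrics[name] for name in weights)


def rank(universities, weights):
    """University names ordered from highest to lowest composite score."""
    return sorted(
        universities,
        key=lambda name: composite_score(universities[name], weights),
        reverse=True,
    )


if __name__ == "__main__":
    for label, weights in [("satisfaction-heavy", SATISFACTION_HEAVY),
                           ("research-heavy", RESEARCH_HEAVY)]:
        print(label, rank(UNIVERSITIES, weights))
```

With these made-up numbers, Uni A comes first under the satisfaction-heavy weights and Uni B comes first under the research-heavy ones. Nothing about either university changed, only the recipe.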

The Research Funding Trap

Research income is another major factor. It’s supposed to reflect academic excellence. But in reality, it’s a proxy for how well a university lands big government grants. Russell Group universities dominate here. They have decades of connections, dedicated grant-writing teams, and labs built for large-scale projects. A small university with brilliant teaching and strong student outcomes might get zero points in this category because it doesn’t run particle physics experiments or climate modeling projects.

Here’s the real issue: research funding doesn’t automatically improve undergraduate teaching. A professor who spends 60% of their time writing grant proposals isn’t grading essays or holding office hours. Yet a university with high research income gets a higher ranking - even if undergraduates get less personal attention.

Graduate Salaries: A Flawed Metric

Graduate salaries, or closely related measures of graduate outcomes, feed into almost every ranking. The idea is simple: if graduates earn more, the degree must be better. But this metric ignores geography, gender, and field of study.

For example, a university in London might rank higher because its graduates work in finance or tech - industries that pay well. A university in the Midlands that produces excellent teachers, nurses, or social workers might rank lower, even though those graduates have stable careers and high job satisfaction. The ranking doesn’t care if your job matters - only how much you’re paid.
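The subject-mix effect is easy to show with arithmetic. In the rough sketch below, both universities pay off identically within each field, and only the mix of graduates differs. The salary figures, headcounts, and university names are all hypothetical.

```python
# Hypothetical starting salaries by field (GBP). Illustrative only.
SALARY_BY_FIELD = {"finance": 38000, "tech": 36000, "teaching": 28000, "nursing": 27000}

# Hypothetical graduate headcounts per field at two invented universities.
LONDON_UNI = {"finance": 400, "tech": 400, "teaching": 100, "nursing": 100}
MIDLANDS_UNI = {"finance": 100, "tech": 100, "teaching": 400, "nursing": 400}


def average_salary(graduates_by_field, salary_by_field):
    """Headline average salary across all graduates, ignoring subject mix."""
    total_pay = sum(count * salary_by_field[field]
                    for field, count in graduates_by_field.items())
    total_graduates = sum(graduates_by_field.values())
    return total_pay / total_graduates


if __name__ == "__main__":
    print("London:", average_salary(LONDON_UNI, SALARY_BY_FIELD))
    print("Midlands:", average_salary(MIDLANDS_UNI, SALARY_BY_FIELD))
```

Under these assumptions the London university's headline figure is £35,100 and the Midlands university's is £29,400, even though a nurse or a finance graduate earns exactly the same from either one. The gap comes entirely from which subjects each university teaches.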

Also, salary data is collected 15 months after graduation. That means it misses people who went into further study, took time off to travel, or started businesses. A student who spends two years building a startup won’t show up in the stats. But their future earnings might be far higher than someone who took a corporate job right after graduation.

The Student-to-Staff Ratio Lie

You’ve probably heard that lower student-to-staff ratios mean better teaching. But what does “staff” mean? It includes lecturers, researchers, administrators, and even part-time tutors who teach one module a year. A university that hires 50 temporary lecturers for one-off courses can artificially lower its ratio - even if no one is available for one-on-one help.

Meanwhile, a university that invests in full-time academic advisors, career coaches, and mental health support won’t get credit. Those roles don’t count in the official ratio. So the ranking system rewards quantity of bodies over quality of support.
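A quick sketch shows why it matters how "staff" is counted. It compares students per member of staff when every contract counts as one body against the same figure weighted by full-time equivalent (FTE). The student numbers, staff numbers, and the 0.1 FTE for a one-module tutor are assumptions, and whether a particular table counts heads or FTE is not something this example settles.

```python
from dataclasses import dataclass


@dataclass
class StaffMember:
    role: str
    fte: float  # fraction of a full-time post; 0.1 is an assumed one-module workload


def headcount_ratio(students, staff):
    """Students per staff member, counting every contract as one person."""
    return students / len(staff)


def fte_ratio(students, staff):
    """Students per full-time-equivalent staff member."""
    return students / sum(member.fte for member in staff)


# Hypothetical university: 3,000 students and 100 full-time lecturers,
# plus 50 temporary lecturers who each teach a single module.
STUDENTS = 3000
CORE_STAFF = [StaffMember("lecturer", 1.0) for _ in range(100)]
TEMP_STAFF = [StaffMember("temporary lecturer", 0.1) for _ in range(50)]

if __name__ == "__main__":
    print("Core staff only:", headcount_ratio(STUDENTS, CORE_STAFF))
    print("With temps, by headcount:", headcount_ratio(STUDENTS, CORE_STAFF + TEMP_STAFF))
    print("With temps, by FTE:", round(fte_ratio(STUDENTS, CORE_STAFF + TEMP_STAFF), 1))
```

Counted by headcount, the 50 temporary hires move the ratio from 30 students per staff member to 20. Counted by FTE, it barely shifts, landing at about 28.6. That is much closer to what students would actually experience.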

What Gets Ignored Completely?

There are huge parts of university life that never make it into the rankings:

- Student mental health services

- Accessibility for disabled students

- Quality of accommodation

- Local community engagement

- Internship placement rates outside big cities

- Retention rates for students from low-income backgrounds

These aren’t minor details - they’re make-or-break factors for many students. But since they’re hard to measure, they’re left out. The result? Rankings push universities to focus on what’s counted, not what matters.

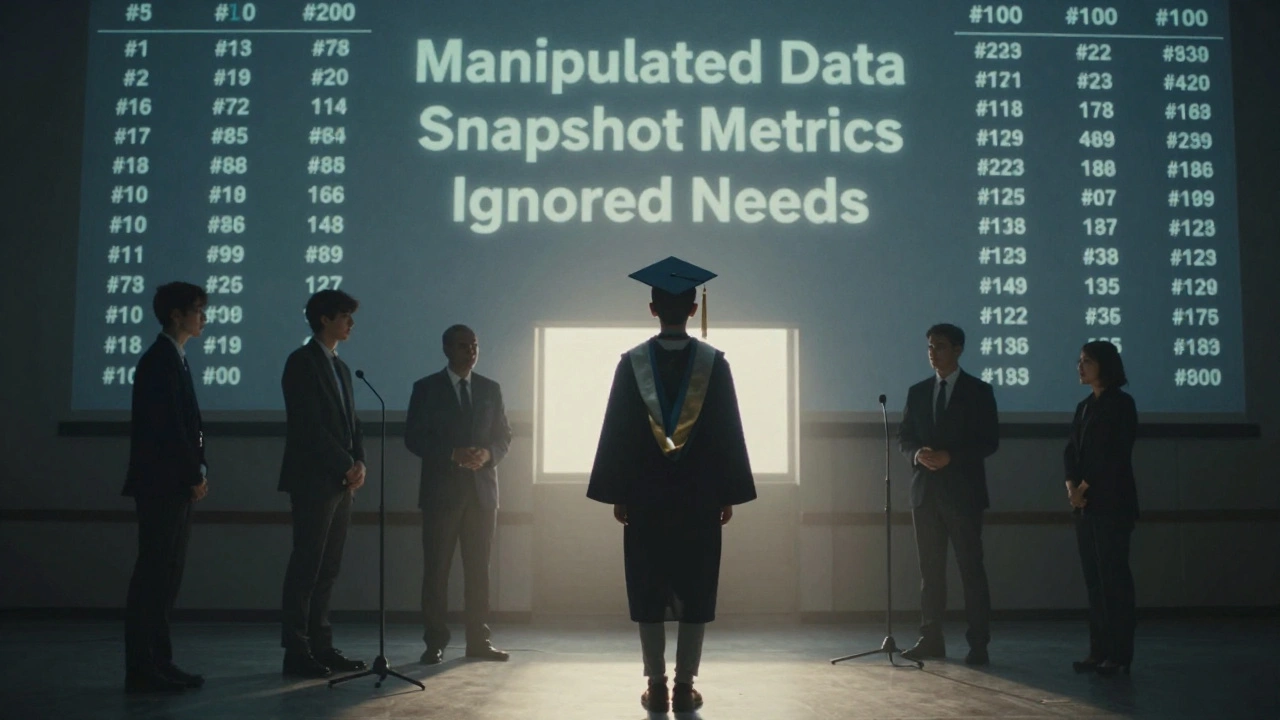

How Universities Game the System

It’s not just flawed metrics - it’s active manipulation. Universities now have teams dedicated to “ranking optimization.” They know exactly which levers to pull.

For example:

- They limit admissions to only high-achieving students to boost entry tariff scores.

- They encourage students to complete satisfaction surveys by offering raffles or free coffee.

- They hire temporary staff just before the data collection window to lower the student-to-staff ratio.

- They reclassify courses to shift students into higher-paying graduate categories.

One university in the North West reportedly hired 120 temporary lecturers for one semester to improve its ratio. The next year, they let them all go. No one noticed - because the ranking only looked at one snapshot in time.
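The snapshot problem can be sketched the same way. The example below reuses the 120 temporary lecturers from the anecdote, but everything else is a hypothetical chosen for illustration: the student count, the permanent staff count, the months the temporary staff are employed, and the census month.

```python
STUDENTS = 8000            # hypothetical
BASE_STAFF = 400           # hypothetical permanent academic staff
TEMP_STAFF = 120           # figure from the anecdote above
TEMP_MONTHS = {10, 11, 12, 1}  # hypothetical one-semester contracts
CENSUS_MONTH = 12          # hypothetical month in which the ranking data is collected


def staff_in_month(month):
    """Academic staff employed in a given calendar month (1-12)."""
    return BASE_STAFF + (TEMP_STAFF if month in TEMP_MONTHS else 0)


def snapshot_ratio(month):
    """Students per staff member as seen on a single census date."""
    return STUDENTS / staff_in_month(month)


def year_average_ratio():
    """Students per staff member using average staffing across the year."""
    average_staff = sum(staff_in_month(m) for m in range(1, 13)) / 12
    return STUDENTS / average_staff


if __name__ == "__main__":
    print("Census snapshot:", round(snapshot_ratio(CENSUS_MONTH), 2))
    print("Year-round average:", round(year_average_ratio(), 2))
    print("Month without temps:", snapshot_ratio(6))
```

With these numbers the census snapshot shows about 15.4 students per staff member. The year-round average is about 18.2, and for most of the year the figure is 20. A ranking that only looks at the census month sees only the best of those three numbers.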

What Should You Look For Instead?

If you’re choosing a university, ignore the overall rank. Look deeper:

- Check the teaching quality score - not just satisfaction. What’s the actual feedback from students in your course?

- Look at graduate outcomes by subject. A university ranked 20th overall might be #2 in Social Work.

- Check course-level data on Discover Uni (the official site that replaced Unistats) and read student reviews on StudentCrowd. Look for patterns - not just ratings.

- Ask about placement support. Do they help students find internships? Do they have partnerships with local employers?

- Visit the campus. Talk to current students. Ask: “Who do you go to when you’re struggling?”

The best university for you isn’t the one at the top of the table. It’s the one that supports you - not the one that optimized for a formula.

Why This Matters Beyond Rankings

These rankings influence government funding, student visas, and even international reputation. When universities chase rankings, they stop focusing on real education. They stop innovating. They stop listening to students.

And it’s not just students who lose. Society loses too. We need universities that train nurses, teachers, and community leaders - not just high-salary tech workers. But the current system pushes everyone toward the same narrow definition of success.

The rankings aren’t wrong - they’re incomplete. And that’s far more dangerous than being wrong.

Are UK university rankings accurate?

No - not in the way most people think. They measure how well universities meet specific data criteria, not how good the education is. A university can rank highly by manipulating metrics like student numbers, survey response rates, or temporary staff hires - even if teaching quality stays the same.

Do rankings reflect teaching quality?

Not reliably. Teaching quality is often measured through student satisfaction surveys, which are influenced by how easy a course is, not how challenging or valuable it is. A university that lowers standards to boost satisfaction scores can rank higher than one that pushes students harder.

Why do Russell Group universities always rank higher?

They dominate in research funding and graduate salary metrics, which carry heavy weight. But this doesn’t mean they offer better undergraduate teaching. Many Russell Group schools focus heavily on postgraduate research, leaving undergraduates with larger class sizes and less personal support.

Can a university improve its ranking without improving education?

Absolutely. Universities hire ranking consultants, reclassify courses to shift graduates into higher-paying categories, and bring in temporary staff just before data collection. These tactics change the numbers - not the student experience.

What should I prioritize when choosing a university?

Look at subject-specific rankings, graduate outcomes in your field, student reviews, internship support, and campus resources. Ask current students about their real experience - not what the website says. A university ranked #10 overall might be #1 in your course.